Nouns is a protocol for proliferating Nouns.

Introduction

The Nouns protocol is a suite of smart contracts that generates and auctions one Noun every day. The protocol serves as an open standard for generative art: Nouns. Because all of the art is released into the public domain, developers can build on the protocol without restriction at the smart contract or application layer.

This documentation describes the mechanisms that power the Nouns protocol and what each component entails. If you have any questions, please reach out at https://discord.gg/nouns

Smart contract overview

The Nouns protocol consists of three primary components: Nouns, auctions, and the DAO. The suite of smart contracts gives you full access to the auctions and generative art that power the protocol.

Contracts

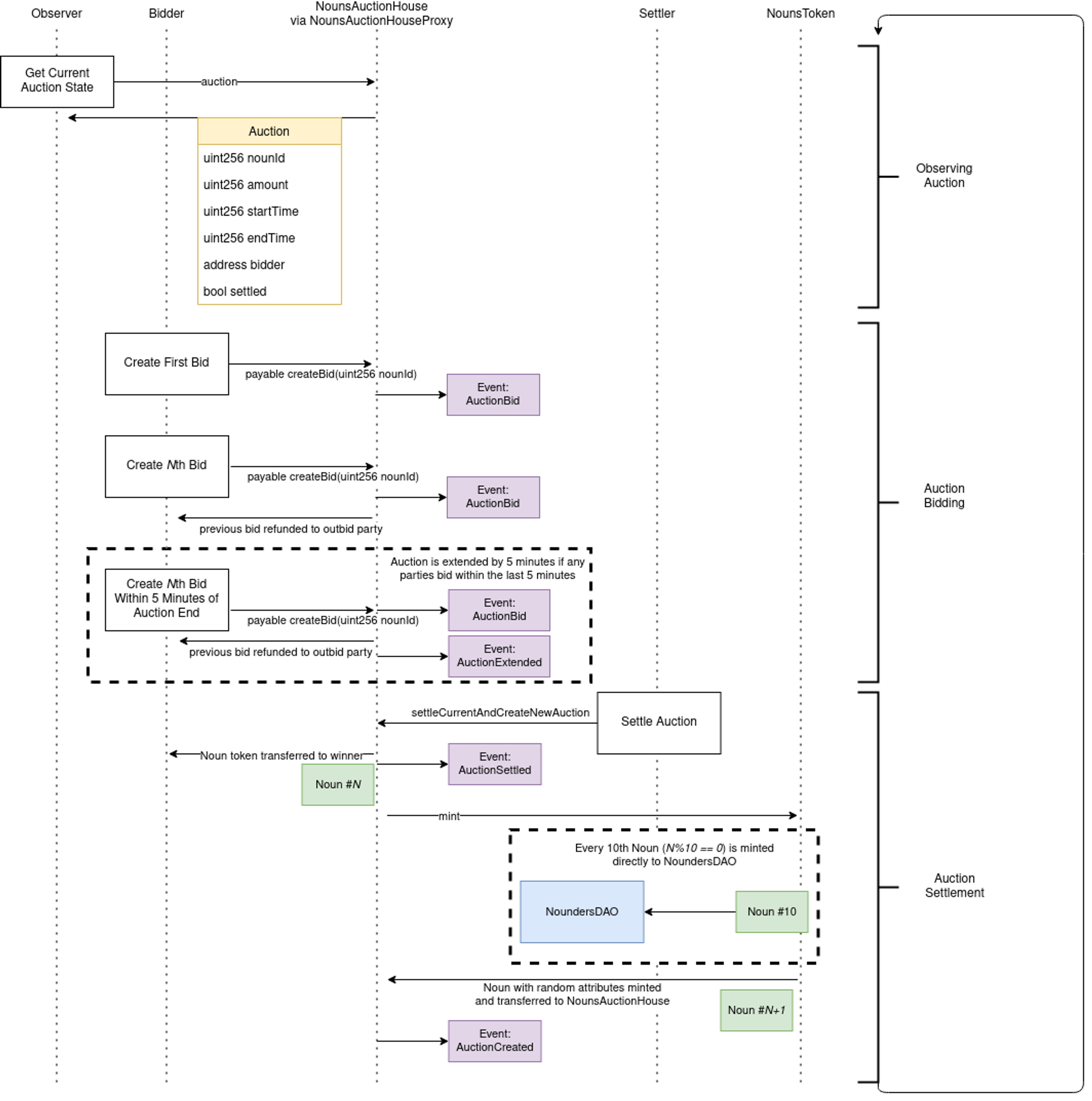

Auction lifecycle

Every auction begins where the last one ends. On settlement of an auction, a new Noun is minted and the next auction is created, all in the same transaction.
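A minimal off-chain model of this lifecycle, written in Python rather than Solidity so that it can be run locally. The `AuctionHouse` class, the 24-hour `DURATION`, and the sequential Noun IDs are assumptions made for illustration; the real logic lives in the NounsAuctionHouse contract.

```python
from dataclasses import dataclass

DURATION = 24 * 60 * 60  # assumed auction length in seconds (one Noun per day)


@dataclass
class Auction:
    noun_id: int
    start_time: int
    end_time: int
    settled: bool = False


class AuctionHouse:
    """Toy model: settling one auction and opening the next is a single step."""

    def __init__(self, now: int) -> None:
        self.next_noun_id = 0  # assumption: IDs are handed out sequentially
        self.auction = self._create_auction(now)

    def _create_auction(self, now: int) -> Auction:
        noun_id = self.next_noun_id  # stands in for minting a new Noun
        self.next_noun_id += 1
        return Auction(noun_id, start_time=now, end_time=now + DURATION)

    def settle_current_and_create_new_auction(self, now: int) -> None:
        if now < self.auction.end_time:
            raise ValueError("Auction hasn't completed")
        self.auction.settled = True
        # The next auction starts at the moment the previous one is settled.
        self.auction = self._create_auction(now)


house = AuctionHouse(now=0)
house.settle_current_and_create_new_auction(now=DURATION + 30)
print(house.auction)
```

Because the next auction only starts when someone settles the previous one, start times drift by however long settlement is delayed (30 seconds in this run).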

Timing Diagram

Settlement

To settle an auction, the NounsAuctionHouse contract is called. The function settleCurrentAndCreateNewAuction() both settles the current auction and creates a new one:

function settleCurrentAndCreateNewAuction() external override nonReentrant whenNotPaused {

_settleAuction();

_createAuction();

}

The _settleAuction() function checks that the current auction has begun, that it has not been settled previously and that the auction time has ended:

function _settleAuction() internal {

INounsAuctionHouse.Auction memory _auction = auction;

require(_auction.startTime != 0, "Auction hasn't begun");

require(!_auction.settled, 'Auction has already been settled');

require(block.timestamp >= _auction.endTime, "Auction hasn't completed");

auction.settled = true;

....

}

If settlement is valid, the Noun is transferred from the NounsAuctionHouse to the winning bidder's address:

nouns.transferFrom(address(this), _auction.bidder, _auction.nounId);

If there are no bids, the Noun is burned by transferring the token to the zero address:

_burn(nounId);

Creating a new auction

Creating a new auction happens in the same transaction as the settlement of the previous auction. From the NounsAuctionHouse contract, _createAuction() calls the NounsToken contract to mint a new Noun (which transfers it into NounsAuctionHouse for escrow) and then uses the newly minted nounId to create a new Auction. If minting fails with an error, the auction house is paused instead.

function _createAuction() internal {

try nouns.mint() returns (uint256 nounId) {

uint256 startTime = block.timestamp;

uint256 endTime = startTime + duration;

auction = Auction({

nounId: nounId,

amount: 0,

startTime: startTime,

endTime: endTime,

bidder: payable(0),

settled: false

});

emit AuctionCreated(nounId, startTime, endTime);

} catch Error(string memory) {

_pause();

}

}

The now active auction is stored in storage and available for anyone to view.

contract NounsAuctionHouse {

...

// The active auction

INounsAuctionHouse.Auction public auction;

...

}

The Auction has the following properties:

struct Auction {

// ID for the Noun (ERC721 token ID)

uint256 nounId;

// The current highest bid amount

uint256 amount;

// The time that the auction started

uint256 startTime;

// The time that the auction is scheduled to end

uint256 endTime;

// The address of the current highest bid

address payable bidder;

// Whether or not the auction has been settled

bool settled;

}

Head over to the verified NounsAuctionHouseProxy contract on Etherscan and click on auction to see the current live auction!

Creating bids

A bid can be created by anyone so long as three requirements are met:

- Valid auction: the Noun the bid is being created for is up for auction in the current auction.

- Active auction: the current auction has not ended.

- Minimum bid: the amount of ETH sent in the transaction is at least the reserve price and at least the current highest bid plus the minimum bid increment.
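The three requirements can be sketched off-chain as a single check. This Python model is illustrative only: the names `validate_bid` and `min_next_bid` and the sample values are assumptions, while the comparisons and error messages mirror the `require` statements in `createBid`. Amounts are integers in wei, as they are on-chain.

```python
WEI_PER_ETH = 10**18


def min_next_bid(current_amount: int, min_bid_increment_percentage: int) -> int:
    """Smallest bid (in wei) that outbids `current_amount`, using integer math."""
    return current_amount + (current_amount * min_bid_increment_percentage) // 100


def validate_bid(
    bid_noun_id: int,
    value: int,
    now: int,
    auction_noun_id: int,
    auction_end_time: int,
    auction_amount: int,
    reserve_price: int,
    min_bid_increment_percentage: int,
) -> None:
    """Raise ValueError if the bid fails any of the three requirements."""
    # Valid auction: the bid must target the Noun currently up for auction.
    if bid_noun_id != auction_noun_id:
        raise ValueError("Noun not up for auction")
    # Active auction: the end time must not have passed.
    if now >= auction_end_time:
        raise ValueError("Auction expired")
    # Minimum bid: at least the reserve price and the last bid plus the increment.
    if value < reserve_price:
        raise ValueError("Must send at least reservePrice")
    if value < min_next_bid(auction_amount, min_bid_increment_percentage):
        raise ValueError("Must send more than last bid by minBidIncrementPercentage amount")


# With a current bid of 10 ETH and a 2% increment, the next bid must be at least 10.2 ETH.
next_bid = min_next_bid(10 * WEI_PER_ETH, 2)
print(next_bid)
```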

function createBid(uint256 nounId) external payable override nonReentrant {

INounsAuctionHouse.Auction memory _auction = auction;

require(_auction.nounId == nounId, 'Noun not up for auction');

require(block.timestamp < _auction.endTime, 'Auction expired');

require(msg.value >= reservePrice, 'Must send at least reservePrice');

require(

msg.value >= _auction.amount + ((_auction.amount * minBidIncrementPercentage) / 100),

'Must send more than last bid by minBidIncrementPercentage amount'

);

...

}

For example, if the current highest bid is 10 ETH and the minBidIncrementPercentage is 2, the minimum bid will be 10 * 1.02 or 10.2 ETH.

If the bid is valid, the previous highest bidder's bid amount is refunded:

...

address payable lastBidder = _auction.bidder;

// Refund the last bidder, if applicable

if (lastBidder != address(0)) {

_safeTransferETHWithFallback(lastBidder, _auction.amount);

}

...

If the bid is placed within a certain time frame before the auction's end time, the auction's end time will be extended. This time frame is the timeBuffer property on the NounsAuctionHouse contract which can be modified by the NounsDAO.
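A small Python sketch of the extension rule. It assumes that a late bid moves the end time to exactly `timeBuffer` seconds after the bid, which is one reading of "the minimum amount of time left in an auction after a new bid is created"; the function name and the 300-second buffer are hypothetical.

```python
def extend_end_time(now: int, end_time: int, time_buffer: int) -> int:
    """Return the auction end time after a valid bid arrives at `now`."""
    if end_time - now < time_buffer:
        # Less than `time_buffer` seconds remain, so push the end time out.
        return now + time_buffer
    return end_time


TIME_BUFFER = 300  # hypothetical value, in seconds
END_TIME = 1_000

early = extend_end_time(now=600, end_time=END_TIME, time_buffer=TIME_BUFFER)
late = extend_end_time(now=800, end_time=END_TIME, time_buffer=TIME_BUFFER)
print(early, late)
```

The early bid leaves the end time untouched, while the late bid (200 seconds before the end) extends the auction so that a full buffer remains.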

// The minimum amount of time left in an auction after a new bid is created

uint256 public timeBuffer;

Generative on-chain art

All Nouns artwork lives on-chain; we do not use pointers to other networks such as IPFS. This is possible because Noun parts are compressed and stored on-chain using a custom run-length encoding (RLE), which is a form of lossless compression.

While the NounsToken contract handles the minting of Nouns, the NounsSeeder and NounsDescriptor contracts handle the determination of Noun attributes and the rendering of the artwork, respectively.

Noun attributes

All of a Noun's attributes are determined by its Seed:

struct Seed {

uint48 background;

uint48 body;

uint48 accessory;

uint48 head;

uint48 glasses;

}

The Seed is a collection of part indexes that can be randomly generated using the NounsSeeder contract by calling the generateSeed function below. The pseudo-random number used to determine Noun parts is computed by hashing the previous block's blockhash together with the passed-in nounId. Seed generation is not truly random: it can be predicted if you know the previous block's blockhash.

The second argument is the address of the INounsDescriptor, the contract in charge of storing and rendering the artwork (more on that below).
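The bit-slicing that generateSeed performs can be tried off-chain with a short Python sketch. It takes the 256-bit pseudo-random number as an input instead of computing it, because keccak256 is not available in the Python standard library (hashlib's `sha3_256` is a different function). The function name and the part counts are hypothetical.

```python
from dataclasses import dataclass

MASK_48 = (1 << 48) - 1  # a uint48 cast keeps only the low 48 bits


@dataclass(frozen=True)
class Seed:
    background: int
    body: int
    accessory: int
    head: int
    glasses: int


def seed_from_pseudorandomness(pseudorandomness: int, counts: dict) -> Seed:
    """Slice a 256-bit integer into five 48-bit windows, one per part type."""

    def pick(shift: int, count: int) -> int:
        # Shift the window down, truncate to 48 bits, then wrap to the part count.
        return ((pseudorandomness >> shift) & MASK_48) % count

    return Seed(
        background=pick(0, counts["background"]),
        body=pick(48, counts["body"]),
        accessory=pick(96, counts["accessory"]),
        head=pick(144, counts["head"]),
        glasses=pick(192, counts["glasses"]),
    )


# Hypothetical part counts; the real values come from the descriptor contract.
COUNTS = {"background": 2, "body": 30, "accessory": 137, "head": 234, "glasses": 21}

# A hand-built number whose five windows hold 1, 2, 3, 4 and 5.
number = (5 << 192) | (4 << 144) | (3 << 96) | (2 << 48) | 1
seed = seed_from_pseudorandomness(number, COUNTS)
print(seed)
```

Each part reads a different 48-bit window of the same number, so one hash is enough to pick all five traits.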

function generateSeed(uint256 nounId, INounsDescriptor descriptor) external view override returns (Seed memory) {

uint256 pseudorandomness = uint256(

keccak256(abi.encodePacked(blockhash(block.number - 1), nounId))

);

uint256 backgroundCount = descriptor.backgroundCount();

uint256 bodyCount = descriptor.bodyCount();

uint256 accessoryCount = descriptor.accessoryCount();

uint256 headCount = descriptor.headCount();

uint256 glassesCount = descriptor.glassesCount();

return Seed({

background: uint48(

uint48(pseudorandomness) % backgroundCount

),

body: uint48(

uint48(pseudorandomness >> 48) % bodyCount

),

accessory: uint48(

uint48(pseudorandomness >> 96) % accessoryCount

),

head: uint48(

uint48(pseudorandomness >> 144) % headCount

),

glasses: uint48(

uint48(pseudorandomness >> 192) % glassesCount

)

});

}

You can also construct a Seed directly if you know which parts you want!

Each Noun's seed is stored in the NounsToken.seeds property. Head over to the verified NounsToken contract on Etherscan and click on #26 (the seeds property). Input any Noun ID and you'll get the seed that determines its traits!

On-chain art

Encoding

Noun 'parts' are compressed using run-length encoding and stored in the NounsDescriptor contract.

Consecutive, same-color pixels in each row of the image are grouped together. These compressed pixel representations are then drawn as SVG rectangles to form a Noun.

The algorithm used by Nouns is optimized for the creation of composite images, where the majority of the pixels in each input image are transparent. This is accomplished by defining a bounding box around the content in each image and only encoding the pixels inside the box.

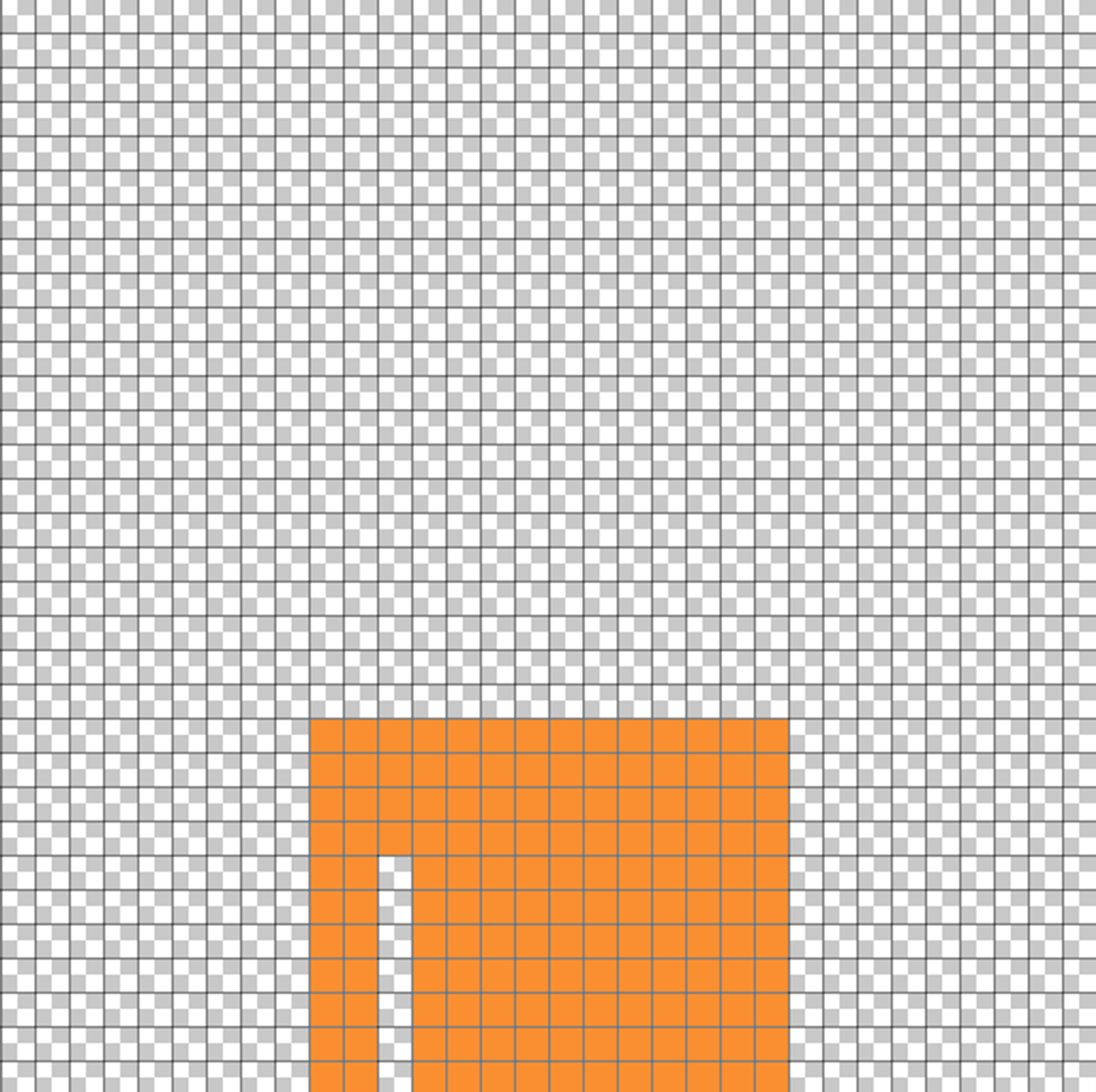

Let's use this body as an example:

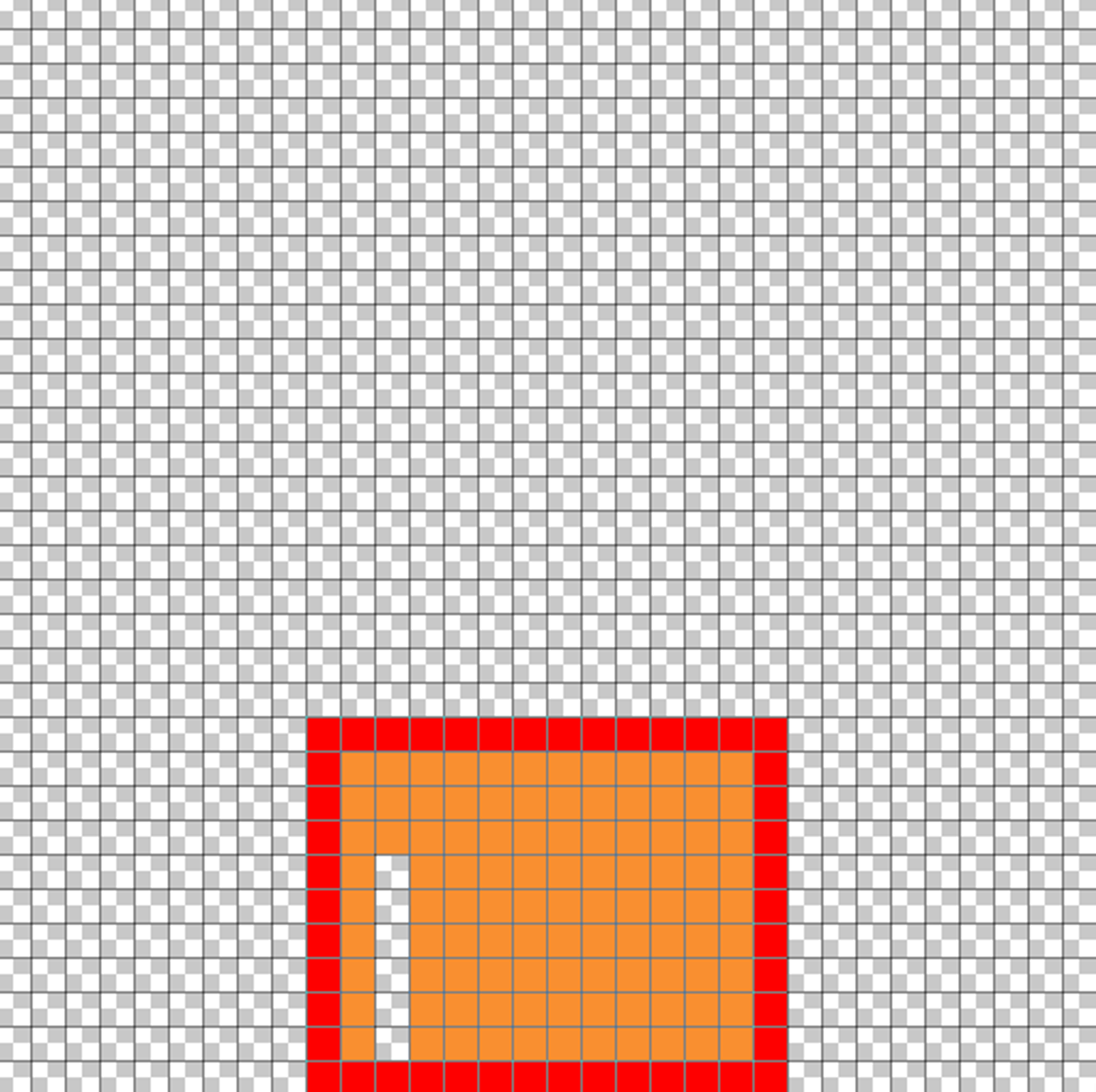

The majority of this 32x32 pixel grid is empty and therefore doesn't need to be drawn. Rather than include all transparent pixels in our runs, we'll define a bounding box that excludes all pixels outside the actual content:

For the above image, the bounding box consists of the following values:

- 21 (top)

- 23 (right)

- 31 (bottom)

- 9 (left)

When drawing Nouns, we start at the top-left of the bounding box and draw each row from top to bottom. We need two pieces of data to draw every SVG rectangle:

- The length in pixels

- The color

To save space, each color is represented as a number, which gives us enough information to look up the hex color value. In this example, assume the number representation of orange is 17 and transparent is 0.

With that assumption, the encoding is as follows:

First row:

14 (rectangle length) | 17 (color index)

Entire body:

14|17 14|17 14|17 14|17 02|17 01|00 11|17 02|17 01|00 11|17 02|17 01|00 11|17 02|17 01|00 11|17 02|17 01|00 11|17 02|17 01|00 11|17 02|17 01|00 11|17
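The runs above can be reproduced with a few lines of Python. This is a sketch of the row-by-row grouping only, not the encoder used by the Nouns tooling; the helper names are hypothetical, and the color indexes are the ones assumed in the text (17 for orange, 0 for transparent).

```python
from itertools import groupby

ORANGE, TRANSPARENT = 17, 0  # color indexes assumed in the text


def encode_row(row: list[int]) -> list[tuple[int, int]]:
    """Group consecutive same-color pixels into (length, color_index) runs."""
    return [(len(list(group)), color) for color, group in groupby(row)]


def encode_rows(rows: list[list[int]]) -> list[tuple[int, int]]:
    """Encode each row separately so that a run never spans two rows."""
    return [run for row in rows for run in encode_row(row)]


def format_runs(runs: list[tuple[int, int]]) -> str:
    return " ".join(f"{length:02d}|{color:02d}" for length, color in runs)


# The body inside its bounding box: 14 pixels wide, 11 rows tall.
solid_row = [ORANGE] * 14
split_row = [ORANGE] * 2 + [TRANSPARENT] + [ORANGE] * 11
body = [solid_row] * 4 + [split_row] * 7

first_row = format_runs(encode_row(body[0]))
entire_body = format_runs(encode_rows(body))
print(first_row)
print(entire_body)
```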

As a final step, the bounds and pixel data are concatenated, and a 'color palette index' is prepended to the string. This index is a number that tells the renderer where to access the colors for the part. This allows us to support a large number of possible colors, while using as little storage as possible. The entire string is then hex-encoded.
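A sketch of that final step in Python. The byte layout is an assumption: one byte per value, ordered as palette index, then top, right, bottom, left, then each (length, color index) pair, with a `0x` prefix on the result. The function name `encode_part` and the palette index of 0 are hypothetical.

```python
def encode_part(
    palette_index: int,
    bounds: tuple[int, int, int, int],
    runs: list[tuple[int, int]],
) -> str:
    """Concatenate palette index, bounds and runs, then hex-encode the bytes."""
    top, right, bottom, left = bounds
    header = bytes([palette_index, top, right, bottom, left])
    pixel_data = bytes(value for run in runs for value in run)
    return "0x" + (header + pixel_data).hex()


# The example body: bounds 21/23/31/9 and the runs listed in the text.
body_runs = [(14, 17)] * 4 + [(2, 17), (1, 0), (11, 17)] * 7
encoded = encode_part(0, (21, 23, 31, 9), body_runs)
print(encoded)
```

Under these assumptions the whole body fits in 55 bytes: 5 for the header and 2 for each of the 25 runs.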

That's it! The hex-encoded data for all Noun parts can be found in the image-data.json file inside the Nouns repository.

Rendering

In this context, 'rendering' means converting the run-length encoded Noun parts to an SVG image that can be viewed on any computer. This conversion is 100% on-chain and occurs in the MultiPartRLEToSVG library.

The process is simple - loop over selected parts, parse the image data, and draw all SVG rectangles.

To draw each part, we start at the top-left of the bounding box and loop through all rects, concatenating at several depths to reduce gas usage. It's easiest to explain this process by adding comments in the source code of the _generateSVGRects function:

// This is a lookup table that enables very cheap int to string

// conversions when operating on a set of predefined integers.

// This is used below to convert the integer length of each rectangle

// in a 32x32 pixel grid to the string representation of the length

// in a 320x320 pixel grid, which is the chosen dimension for all Nouns.

// For example: A length of 3 gets mapped to '30'.

string[33] memory lookup = [

'0', '10', '20', '30', '40', '50', '60', '70',

'80', '90', '100', '110', '120', '130', '140', '150',

'160', '170', '180', '190', '200', '210', '220', '230',

'240', '250', '260', '270', '280', '290', '300', '310',

'320'

];

// The string of SVG rectangles

string memory rects;

// Loop through all Noun parts

for (uint8 p = 0; p < params.parts.length; p++) {

// Convert the part data into a format that's easier to consume

// than a byte array.

DecodedImage memory image = _decodeRLEImage(params.parts[p]);

// Get the color palette used by the current part (`params.parts[p]`)

string[] storage palette = palettes[image.paletteIndex];

// These are the x and y coordinates of the rect that's currently being drawn.

// We start at the top-left of the pixel grid when drawing a new part.

uint256 currentX = image.bounds.left;

uint256 currentY = image.bounds.top;

// The `cursor` and `buffer` are used here as a gas-saving technique.

// We load enough data into a string array to draw four rectangles.

// Once the string array is full, we call `_getChunk`, which writes the

// four rectangles to a `chunk` variable before concatenating them with the

// existing part string. If there is remaining, unwritten data inside the

// `buffer` after we exit the rect loop, it will be written before the

// part rectangles are merged with the existing part data.

// This saves gas by reducing the size of the strings we're concatenating

// during most loops.

uint256 cursor;

string[16] memory buffer;

// The part rectangles

string memory part;

for (uint256 i = 0; i < image.rects.length; i++) {

Rect memory rect = image.rects[i];

// Skip fully transparent rectangles. Transparent rectangles

// always have a color index of 0.

if (rect.colorIndex != 0) {

// Load the rectangle data into the buffer

buffer[cursor] = lookup[rect.length]; // width

buffer[cursor + 1] = lookup[currentX]; // x

buffer[cursor + 2] = lookup[currentY]; // y

buffer[cursor + 3] = palette[rect.colorIndex]; // color

cursor += 4;

if (cursor >= 16) {

// Write the rectangles from the buffer to a string

// and concatenate with the existing part string.

part = string(abi.encodePacked(part, _getChunk(cursor, buffer)));

cursor = 0;

}

}

// Move the x coordinate `rect.length` pixels to the right

currentX += rect.length;

// If the right bound has been reached, reset the x coordinate

// to the left bound and shift the y coordinate down one row.

if (currentX == image.bounds.right) {

currentX = image.bounds.left;

currentY++;

}

}

// If there are unwritten rectangles in the buffer, write them to a

// `chunk` and concatenate with the existing part data.

if (cursor != 0) {

part = string(abi.encodePacked(part, _getChunk(cursor, buffer)));

}

// Concatenate the part with all previous parts

rects = string(abi.encodePacked(rects, part));

}

return rects;
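The same walk over the rects can be modelled off-chain in a few lines of Python. This sketch drops the gas-saving `cursor` and `buffer` chunking and simply joins strings; the function name `render_part`, the tuple layout of `bounds`, and the palette contents are assumptions, while the `<rect>` format follows the sample SVG shown in this document.

```python
def render_part(
    bounds: tuple[int, int, int, int],
    runs: list[tuple[int, int]],
    palette: dict[int, str],
    scale: int = 10,
) -> str:
    """Turn (length, color_index) runs into a string of SVG <rect> elements."""
    top, right, bottom, left = bounds
    x, y = left, top  # start at the top-left of the bounding box
    rects = []
    for length, color_index in runs:
        if color_index != 0:  # color index 0 is transparent and is skipped
            rects.append(
                f'<rect width="{length * scale}" height="{scale}" '
                f'x="{x * scale}" y="{y * scale}" fill="#{palette[color_index]}" />'
            )
        x += length
        if x == right:  # right bound reached: move to the start of the next row
            x = left
            y += 1
    return "".join(rects)


# Hypothetical palette entry; the body runs are the ones from the encoding example.
palette = {17: "63a0f9"}
body_runs = [(14, 17)] * 4 + [(2, 17), (1, 0), (11, 17)] * 7
svg_rects = render_part((21, 23, 31, 9), body_runs, palette)
print(svg_rects[:70])
```

Multiplying by `scale` plays the role of the lookup table in the Solidity version: both map a 32x32 coordinate onto the 320x320 canvas.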

Accessing Image Data

Once the entire SVG has been generated, it is base64 encoded. The token URI, which contains the inlined token name, description, and image, is also base64 encoded. This allows us to encode characters that aren't allowed as part of a URI.
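A hedged Python sketch of how a client might unpack such a token URI. It assumes the URI is a `data:application/json;base64,` data URI whose JSON has `name`, `description` and `image` fields, and that `image` is a `data:image/svg+xml;base64,` data URI; these prefixes and the helper name are assumptions, not something this document specifies.

```python
import base64
import json

JSON_PREFIX = "data:application/json;base64,"  # assumed token URI prefix
IMAGE_PREFIX = "data:image/svg+xml;base64,"  # assumed image prefix


def decode_token_uri(token_uri: str) -> dict:
    """Decode the base64 JSON metadata and, if present, the inlined SVG."""
    if not token_uri.startswith(JSON_PREFIX):
        raise ValueError("unexpected token URI prefix")
    metadata = json.loads(base64.b64decode(token_uri[len(JSON_PREFIX):]))
    image = metadata.get("image", "")
    if image.startswith(IMAGE_PREFIX):
        svg_bytes = base64.b64decode(image[len(IMAGE_PREFIX):])
        metadata["svg"] = svg_bytes.decode("utf-8")
    return metadata


# Build a made-up token URI so the decoder has something to work on.
svg = '<svg xmlns="http://www.w3.org/2000/svg"></svg>'
sample_metadata = {
    "name": "Noun 1",
    "description": "Sample metadata",
    "image": IMAGE_PREFIX + base64.b64encode(svg.encode()).decode(),
}
sample_uri = JSON_PREFIX + base64.b64encode(json.dumps(sample_metadata).encode()).decode()

decoded = decode_token_uri(sample_uri)
print(decoded["name"])
print(decoded["svg"])
```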

The tokenURI function on the NounsDescriptor accepts two arguments: tokenId and seed. The NounsToken contract calls this function using seed information from its storage to get the corresponding token data:

function tokenURI(uint256 tokenId, INounsSeeder.Seed memory seed) external view returns (string memory);

A second function is exposed that allows you to generate the SVG image for any possible Noun using an arbitrary seed:

function generateSVGImage(INounsSeeder.Seed memory seed) external view returns (string memory);

A successful response will return a base64 encoded string representation of the SVG image, which looks like the following:

PHN2ZyB3aWR0aD0iMzIwIiBoZWlnaHQ9IjMyMCIgdmlld0JveD0iMCAwIDMyMCAzMjAiIHhtbG5zPSJodHRwOi8vd3d3LnczLm9yZy8yMDAwL3N2ZyIgc2hhcGUtcmVuZGVyaW5nPSJjcmlzcEVkZ2VzIj48cmVjdCB3aWR0aD0iMTAwJSIgaGVpZ2h0PSIxMDAlIiBmaWxsPSIjZDVkN2UxIiAvPjxyZWN0IHdpZHRoPSIxNDAiIGhlaWdodD0iMTAiIHg9IjkwIiB5PSIyMTAiIGZpbGw9IiM2M2EwZjkiIC8+PHJlY3Qgd2lkdGg9IjE0MCIgaGVpZ2h0PSIxMCIgeD0iOTAiIHk9IjIyMCIgZmlsbD0iIzYzYTBmOSIgLz48cmVjdCB3aWR0aD0iMTQwIiBoZWlnaHQ9IjEwIiB4PSI5MCIgeT0iMjMwIiBmaWxsPSIjNjNhMGY5IiAvPjxyZWN0IHdpZHRoPSIxNDAiIGhlaWdodD0iMTAiIHg9IjkwIiB5PSIyNDAiIGZpbGw9IiM2M2EwZjkiIC8+PHJlY3Qgd2lkdGg9IjIwIiBoZWlnaHQ9IjEwIiB4PSI5MCIgeT0iMjUwIiBmaWxsPSIjNjNhMGY5IiAvPjxyZWN0IHdpZHRoPSIxMTAiIGhlaWdodD0iMTAiIHg9IjEyMCIgeT0iMjUwIiBmaWxsPSIjNjNhMGY5IiAvPjxyZWN0IHdpZHRoPSIyMCIgaGVpZ2h0PSIxMCIgeD0iOTAiIHk9IjI2MCIgZmlsbD0iIzYzYTBmOSIgLz48cmVjdCB3aWR0aD0iMTEwIiBoZWlnaHQ9IjEwIiB4PSIxMjAiIHk9IjI2MCIgZmlsbD0iIzYzYTBmOSIgLz48cmVjdCB3aWR0aD0iMjAiIGhlaWdodD0iMTAiIHg9IjkwIiB5PSIyNzAiIGZpbGw9IiM2M2EwZjkiIC8+PHJlY3Qgd2lkdGg9IjExMCIgaGVpZ2h0PSIxMCIgeD0iMTIwIiB5PSIyNzAiIGZpbGw9IiM2M2EwZjkiIC8+PHJlY3Qgd2lkdGg9IjIwIiBoZWlnaHQ9IjEwIiB4PSI5MCIgeT0iMjgwIiBmaWxsPSIjNjNhMGY5IiAvPjxyZWN0IHdpZHRoPSIxMTAiIGhlaWdodD0iMTAiIHg9IjEyMCIgeT0iMjgwIiBmaWxsPSIjNjNhMGY5IiAvPjxyZWN0IHdpZHRoPSIyMCIgaGVpZ2h0PSIxMCIgeD0iOTAiIHk9IjI5MCIgZmlsbD0iIzYzYTBmOSIgLz48cmVjdCB3aWR0aD0iMTEwIiBoZWlnaHQ9IjEwIiB4PSIxMjAiIHk9IjI5MCIgZmlsbD0iIzYzYTBmOSIgLz48cmVjdCB3aWR0aD0iMjAiIGhlaWdodD0iMTAiIHg9IjkwIiB5PSIzMDAiIGZpbGw9IiM2M2EwZjkiIC8+PHJlY3Qgd2lkdGg9IjExMCIgaGVpZ2h0PSIxMCIgeD0iMTIwIiB5PSIzMDAiIGZpbGw9IiM2M2EwZjkiIC8+PHJlY3Qgd2lkdGg9IjIwIiBoZWlnaHQ9IjEwIiB4PSI5MCIgeT0iMzEwIiBmaWxsPSIjNjNhMGY5IiAvPjxyZWN0IHdpZHRoPSIxMTAiIGhlaWdodD0iMTAiIHg9IjEyMCIgeT0iMzEwIiBmaWxsPSIjNjNhMGY5IiAvPjxyZWN0IHdpZHRoPSIyMCIgaGVpZ2h0PSIxMCIgeD0iMTQwIiB5PSIyNDAiIGZpbGw9IiMwMDAwMDAiIC8+PHJlY3Qgd2lkdGg9IjIwIiBoZWlnaHQ9IjEwIiB4PSIxODAiIHk9IjI0MCIgZmlsbD0iIzAwMDAwMCIgLz48cmVjdCB3aWR0aD0iMTAiIGhlaWdodD0iMTAiIHg9IjEzMCIgeT0iMjUwIiBmaWxsPSIjMDAwMDAwIiAvPjxyZWN0IHdpZHRoPSIyMCIgaGVpZ2h0PSIxMCIgeD0i
MTYwIiB5PSIyNTAiIGZpbGw9IiMwMDAwMDAiIC8+PHJlY3Qgd2lkdGg9IjEwIiBoZWlnaHQ9IjEwIiB4PSIyMDAiIHk9IjI1MCIgZmlsbD0iIzAwMDAwMCIgLz48cmVjdCB3aWR0aD0iMjAiIGhlaWdodD0iMTAiIHg9IjE2MCIgeT0iMjYwIiBmaWxsPSIjMDAwMDAwIiAvPjxyZWN0IHdpZHRoPSIxMDAiIGhlaWdodD0iMTAiIHg9IjUwIiB5PSI2MCIgZmlsbD0iIzJiYjI2YiIgLz48cmVjdCB3aWR0aD0iMTEwIiBoZWlnaHQ9IjEwIiB4PSIxNjAiIHk9IjYwIiBmaWxsPSIjMmJiMjZiIiAvPjxyZWN0IHdpZHRoPSIxMCIgaGVpZ2h0PSIxMCIgeD0iNTAiIHk9IjcwIiBmaWxsPSIjMmJiMjZiIiAvPjxyZWN0IHdpZHRoPSIxMCIgaGVpZ2h0PSIxMCIgeD0iMTcwIiB5PSI3MCIgZmlsbD0iIzJiYjI2YiIgLz48cmVjdCB3aWR0aD0iMTAiIGhlaWdodD0iMTAiIHg9IjIyMCIgeT0iNzAiIGZpbGw9IiMyYmIyNmIiIC8+PHJlY3Qgd2lkdGg9IjEwIiBoZWlnaHQ9IjEwIiB4PSIyNjAiIHk9IjcwIiBmaWxsPSIjMmJiMjZiIiAvPjxyZWN0IHdpZHRoPSIxMCIgaGVpZ2h0PSIxMCIgeD0iNTAiIHk9IjgwIiBmaWxsPSIjMmJiMjZiIiAvPjxyZWN0IHdpZHRoPSIxMTAiIGhlaWdodD0iMTAiIHg9IjcwIiB5PSI4MCIgZmlsbD0iIzJiYjI2YiIgLz48cmVjdCB3aWR0aD0iMTAiIGhlaWdodD0iMTAiIHg9IjE5MCIgeT0iODAiIGZpbGw9IiMyYmIyNmIiIC8+PHJlY3Qgd2lkdGg9IjQwIiBoZWlnaHQ9IjEwIiB4PSIyMTAiIHk9IjgwIiBmaWxsPSIjMmJiMjZiIiAvPjxyZWN0IHdpZHRoPSIxMCIgaGVpZ2h0PSIxMCIgeD0iMjYwIiB5PSI4MCIgZmlsbD0iIzJiYjI2YiIgLz48cmVjdCB3aWR0aD0iMTAiIGhlaWdodD0iMTAiIHg9IjUwIiB5PSI5MCIgZmlsbD0iIzJiYjI2YiIgLz48cmVjdCB3aWR0aD0iMTAiIGhlaWdodD0iMTAiIHg9IjcwIiB5PSI5MCIgZmlsbD0iIzJiYjI2YiIgLz48cmVjdCB3aWR0aD0iMTAiIGhlaWdodD0iMTAiIHg9IjExMCIgeT0iOTAiIGZpbGw9IiMyYmIyNmIiIC8+PHJlY3Qgd2lkdGg9IjEwIiBoZWlnaHQ9IjEwIiB4PSIxOTAiIHk9IjkwIiBmaWxsPSIjMmJiMjZiIiAvPjxyZWN0IHdpZHRoPSIxMCIgaGVpZ2h0PSIxMCIgeD0iMjQwIiB5PSI5MCIgZmlsbD0iIzJiYjI2YiIgLz48cmVjdCB3aWR0aD0iMTAiIGhlaWdodD0iMTAiIHg9IjI2MCIgeT0iOTAiIGZpbGw9IiMyYmIyNmIiIC8+PHJlY3Qgd2lkdGg9IjEwIiBoZWlnaHQ9IjEwIiB4PSI1MCIgeT0iMTAwIiBmaWxsPSIjMmJiMjZiIiAvPjxyZWN0IHdpZHRoPSIxMCIgaGVpZ2h0PSIxMCIgeD0iOTAiIHk9IjEwMCIgZmlsbD0iIzJiYjI2YiIgLz48cmVjdCB3aWR0aD0iNzAiIGhlaWdodD0iMTAiIHg9IjEzMCIgeT0iMTAwIiBmaWxsPSIjMmJiMjZiIiAvPjxyZWN0IHdpZHRoPSI0MCIgaGVpZ2h0PSIxMCIgeD0iMjEwIiB5PSIxMDAiIGZpbGw9IiMyYmIyNmIiIC8+PHJlY3Qgd2lkdGg9IjEwIiBoZWlnaHQ9IjEwIiB4PSIyNjAiIHk9IjEwMCIgZmlsbD0iIzJiYjI2YiIgLz48cmVj
dCB3aWR0aD0iNjAiIGhlaWdodD0iMTAiIHg9IjUwIiB5PSIxMTAiIGZpbGw9IiMyYmIyNmIiIC8+PHJlY3Qgd2lkdGg9IjIwIiBoZWlnaHQ9IjEwIiB4PSIxMjAiIHk9IjExMCIgZmlsbD0iIzJiYjI2YiIgLz48cmVjdCB3aWR0aD0iMTAiIGhlaWdodD0iMTAiIHg9IjE3MCIgeT0iMTEwIiBmaWxsPSIjMmJiMjZiIiAvPjxyZWN0IHdpZHRoPSIxMCIgaGVpZ2h0PSIxMCIgeD0iMjYwIiB5PSIxMTAiIGZpbGw9IiMyYmIyNmIiIC8+PHJlY3Qgd2lkdGg9IjEwIiBoZWlnaHQ9IjEwIiB4PSI1MCIgeT0iMTIwIiBmaWxsPSIjMmJiMjZiIiAvPjxyZWN0IHdpZHRoPSIxMCIgaGVpZ2h0PSIxMCIgeD0iNzAiIHk9IjEyMCIgZmlsbD0iIzJiYjI2YiIgLz48cmVjdCB3aWR0aD0iMTAiIGhlaWdodD0iMTAiIHg9IjEzMCIgeT0iMTIwIiBmaWxsPSIjMmJiMjZiIiAvPjxyZWN0IHdpZHRoPSIxMCIgaGVpZ2h0PSIxMCIgeD0iMTUwIiB5PSIxMjAiIGZpbGw9IiMyYmIyNmIiIC8+PHJlY3Qgd2lkdGg9IjEwIiBoZWlnaHQ9IjEwIiB4PSIxNzAiIHk9IjEyMCIgZmlsbD0iIzJiYjI2YiIgLz48cmVjdCB3aWR0aD0iNjAiIGhlaWdodD0iMTAiIHg9IjE5MCIgeT0iMTIwIiBmaWxsPSIjMmJiMjZiIiAvPjxyZWN0IHdpZHRoPSIxMCIgaGVpZ2h0PSIxMCIgeD0iMjYwIiB5PSIxMjAiIGZpbGw9IiMyYmIyNmIiIC8+PHJlY3Qgd2lkdGg9IjEwIiBoZWlnaHQ9IjEwIiB4PSI1MCIgeT0iMTMwIiBmaWxsPSIjMmJiMjZiIiAvPjxyZWN0IHdpZHRoPSI1MCIgaGVpZ2h0PSIxMCIgeD0iNzAiIHk9IjEzMCIgZmlsbD0iIzJiYjI2YiIgLz48cmVjdCB3aWR0aD0iMTAiIGhlaWdodD0iMTAiIHg9IjEzMCIgeT0iMTMwIiBmaWxsPSIjMmJiMjZiIiAvPjxyZWN0IHdpZHRoPSIzMCIgaGVpZ2h0PSIxMCIgeD0iMTUwIiB5PSIxMzAiIGZpbGw9IiMyYmIyNmIiIC8+PHJlY3Qgd2lkdGg9IjEwIiBoZWlnaHQ9IjEwIiB4PSIyNjAiIHk9IjEzMCIgZmlsbD0iIzJiYjI2YiIgLz48cmVjdCB3aWR0aD0iMTAiIGhlaWdodD0iMTAiIHg9IjUwIiB5PSIxNDAiIGZpbGw9IiMyYmIyNmIiIC8+PHJlY3Qgd2lkdGg9IjEwIiBoZWlnaHQ9IjEwIiB4PSIxMTAiIHk9IjE0MCIgZmlsbD0iIzJiYjI2YiIgLz48cmVjdCB3aWR0aD0iMTAiIGhlaWdodD0iMTAiIHg9IjE3MCIgeT0iMTQwIiBmaWxsPSIjMmJiMjZiIiAvPjxyZWN0IHdpZHRoPSI4MCIgaGVpZ2h0PSIxMCIgeD0iMTkwIiB5PSIxNDAiIGZpbGw9IiMyYmIyNmIiIC8+PHJlY3Qgd2lkdGg9IjEwIiBoZWlnaHQ9IjEwIiB4PSI1MCIgeT0iMTUwIiBmaWxsPSIjMmJiMjZiIiAvPjxyZWN0IHdpZHRoPSIzMCIgaGVpZ2h0PSIxMCIgeD0iNzAiIHk9IjE1MCIgZmlsbD0iIzJiYjI2YiIgLz48cmVjdCB3aWR0aD0iMzAiIGhlaWdodD0iMTAiIHg9IjExMCIgeT0iMTUwIiBmaWxsPSIjMmJiMjZiIiAvPjxyZWN0IHdpZHRoPSIxMCIgaGVpZ2h0PSIxMCIgeD0iMTUwIiB5PSIxNTAiIGZpbGw9IiMyYmIyNmIiIC8+PHJlY3Qgd2lkdGg9IjEwIiBoZWlnaHQ9
IjEwIiB4PSIyNDAiIHk9IjE1MCIgZmlsbD0iIzJiYjI2YiIgLz48cmVjdCB3aWR0aD0iMTAiIGhlaWdodD0iMTAiIHg9IjI2MCIgeT0iMTUwIiBmaWxsPSIjMmJiMjZiIiAvPjxyZWN0IHdpZHRoPSIxMCIgaGVpZ2h0PSIxMCIgeD0iNTAiIHk9IjE2MCIgZmlsbD0iIzJiYjI2YiIgLz48cmVjdCB3aWR0aD0iMTAiIGhlaWdodD0iMTAiIHg9IjkwIiB5PSIxNjAiIGZpbGw9IiMyYmIyNmIiIC8+PHJlY3Qgd2lkdGg9IjEwIiBoZWlnaHQ9IjEwIiB4PSIxMTAiIHk9IjE2MCIgZmlsbD0iIzJiYjI2YiIgLz48cmVjdCB3aWR0aD0iMTAiIGhlaWdodD0iMTAiIHg9IjEzMCIgeT0iMTYwIiBmaWxsPSIjMmJiMjZiIiAvPjxyZWN0IHdpZHRoPSI4MCIgaGVpZ2h0PSIxMCIgeD0iMTUwIiB5PSIxNjAiIGZpbGw9IiMyYmIyNmIiIC8+PHJlY3Qgd2lkdGg9IjEwIiBoZWlnaHQ9IjEwIiB4PSIyNDAiIHk9IjE2MCIgZmlsbD0iIzJiYjI2YiIgLz48cmVjdCB3aWR0aD0iMTAiIGhlaWdodD0iMTAiIHg9IjI2MCIgeT0iMTYwIiBmaWxsPSIjMmJiMjZiIiAvPjxyZWN0IHdpZHRoPSIzMCIgaGVpZ2h0PSIxMCIgeD0iNTAiIHk9IjE3MCIgZmlsbD0iIzJiYjI2YiIgLz48cmVjdCB3aWR0aD0iMTAiIGhlaWdodD0iMTAiIHg9IjkwIiB5PSIxNzAiIGZpbGw9IiMyYmIyNmIiIC8+PHJlY3Qgd2lkdGg9IjEwIiBoZWlnaHQ9IjEwIiB4PSIyNjAiIHk9IjE3MCIgZmlsbD0iIzJiYjI2YiIgLz48cmVjdCB3aWR0aD0iMTAiIGhlaWdodD0iMTAiIHg9IjUwIiB5PSIxODAiIGZpbGw9IiMyYmIyNmIiIC8+PHJlY3Qgd2lkdGg9IjEwIiBoZWlnaHQ9IjEwIiB4PSI3MCIgeT0iMTgwIiBmaWxsPSIjMmJiMjZiIiAvPjxyZWN0IHdpZHRoPSI0MCIgaGVpZ2h0PSIxMCIgeD0iOTAiIHk9IjE4MCIgZmlsbD0iIzJiYjI2YiIgLz48cmVjdCB3aWR0aD0iNzAiIGhlaWdodD0iMTAiIHg9IjE0MCIgeT0iMTgwIiBmaWxsPSIjMmJiMjZiIiAvPjxyZWN0IHdpZHRoPSI1MCIgaGVpZ2h0PSIxMCIgeD0iMjIwIiB5PSIxODAiIGZpbGw9IiMyYmIyNmIiIC8+PHJlY3Qgd2lkdGg9IjEwIiBoZWlnaHQ9IjEwIiB4PSI1MCIgeT0iMTkwIiBmaWxsPSIjMmJiMjZiIiAvPjxyZWN0IHdpZHRoPSIxMCIgaGVpZ2h0PSIxMCIgeD0iOTAiIHk9IjE5MCIgZmlsbD0iIzJiYjI2YiIgLz48cmVjdCB3aWR0aD0iMTAiIGhlaWdodD0iMTAiIHg9IjE4MCIgeT0iMTkwIiBmaWxsPSIjMmJiMjZiIiAvPjxyZWN0IHdpZHRoPSIxMCIgaGVpZ2h0PSIxMCIgeD0iMjYwIiB5PSIxOTAiIGZpbGw9IiMyYmIyNmIiIC8+PHJlY3Qgd2lkdGg9IjIwMCIgaGVpZ2h0PSIxMCIgeD0iNTAiIHk9IjIwMCIgZmlsbD0iIzJiYjI2YiIgLz48cmVjdCB3aWR0aD0iMTAiIGhlaWdodD0iMTAiIHg9IjI2MCIgeT0iMjAwIiBmaWxsPSIjMmJiMjZiIiAvPjxyZWN0IHdpZHRoPSI2MCIgaGVpZ2h0PSIxMCIgeD0iMTAwIiB5PSIxMTAiIGZpbGw9IiMwMDAwMDAiIC8+PHJlY3Qgd2lkdGg9IjYwIiBoZWlnaHQ9IjEwIiB4PSIxNzAiIHk9IjExMCIg
ZmlsbD0iIzAwMDAwMCIgLz48cmVjdCB3aWR0aD0iMzAiIGhlaWdodD0iMTAiIHg9IjEwMCIgeT0iMTIwIiBmaWxsPSIjMDAwMDAwIiAvPjxyZWN0IHdpZHRoPSIxMCIgaGVpZ2h0PSIxMCIgeD0iMTMwIiB5PSIxMjAiIGZpbGw9IiNmZjBlMGUiIC8+PHJlY3Qgd2lkdGg9IjIwIiBoZWlnaHQ9IjEwIiB4PSIxNDAiIHk9IjEyMCIgZmlsbD0iIzAwMDAwMCIgLz48cmVjdCB3aWR0aD0iMzAiIGhlaWdodD0iMTAiIHg9IjE3MCIgeT0iMTIwIiBmaWxsPSIjMDAwMDAwIiAvPjxyZWN0IHdpZHRoPSIxMCIgaGVpZ2h0PSIxMCIgeD0iMjAwIiB5PSIxMjAiIGZpbGw9IiNmZjBlMGUiIC8+PHJlY3Qgd2lkdGg9IjIwIiBoZWlnaHQ9IjEwIiB4PSIyMTAiIHk9IjEyMCIgZmlsbD0iIzAwMDAwMCIgLz48cmVjdCB3aWR0aD0iMTYwIiBoZWlnaHQ9IjEwIiB4PSI3MCIgeT0iMTMwIiBmaWxsPSIjMDAwMDAwIiAvPjxyZWN0IHdpZHRoPSIxMCIgaGVpZ2h0PSIxMCIgeD0iNzAiIHk9IjE0MCIgZmlsbD0iIzAwMDAwMCIgLz48cmVjdCB3aWR0aD0iMTAiIGhlaWdodD0iMTAiIHg9IjEwMCIgeT0iMTQwIiBmaWxsPSIjMDAwMDAwIiAvPjxyZWN0IHdpZHRoPSIxMCIgaGVpZ2h0PSIxMCIgeD0iMTEwIiB5PSIxNDAiIGZpbGw9IiMwYWRjNGQiIC8+PHJlY3Qgd2lkdGg9IjIwIiBoZWlnaHQ9IjEwIiB4PSIxMjAiIHk9IjE0MCIgZmlsbD0iIzAwMDAwMCIgLz48cmVjdCB3aWR0aD0iMTAiIGhlaWdodD0iMTAiIHg9IjE0MCIgeT0iMTQwIiBmaWxsPSIjMTkyOWY0IiAvPjxyZWN0IHdpZHRoPSIxMCIgaGVpZ2h0PSIxMCIgeD0iMTUwIiB5PSIxNDAiIGZpbGw9IiMwMDAwMDAiIC8+PHJlY3Qgd2lkdGg9IjEwIiBoZWlnaHQ9IjEwIiB4PSIxNzAiIHk9IjE0MCIgZmlsbD0iIzAwMDAwMCIgLz48cmVjdCB3aWR0aD0iMTAiIGhlaWdodD0iMTAiIHg9IjE4MCIgeT0iMTQwIiBmaWxsPSIjMGFkYzRkIiAvPjxyZWN0IHdpZHRoPSIyMCIgaGVpZ2h0PSIxMCIgeD0iMTkwIiB5PSIxNDAiIGZpbGw9IiMwMDAwMDAiIC8+PHJlY3Qgd2lkdGg9IjEwIiBoZWlnaHQ9IjEwIiB4PSIyMTAiIHk9IjE0MCIgZmlsbD0iIzE5MjlmNCIgLz48cmVjdCB3aWR0aD0iMTAiIGhlaWdodD0iMTAiIHg9IjIyMCIgeT0iMTQwIiBmaWxsPSIjMDAwMDAwIiAvPjxyZWN0IHdpZHRoPSIxMCIgaGVpZ2h0PSIxMCIgeD0iNzAiIHk9IjE1MCIgZmlsbD0iIzAwMDAwMCIgLz48cmVjdCB3aWR0aD0iNjAiIGhlaWdodD0iMTAiIHg9IjEwMCIgeT0iMTUwIiBmaWxsPSIjMDAwMDAwIiAvPjxyZWN0IHdpZHRoPSI2MCIgaGVpZ2h0PSIxMCIgeD0iMTcwIiB5PSIxNTAiIGZpbGw9IiMwMDAwMDAiIC8+PHJlY3Qgd2lkdGg9IjYwIiBoZWlnaHQ9IjEwIiB4PSIxMDAiIHk9IjE2MCIgZmlsbD0iIzAwMDAwMCIgLz48cmVjdCB3aWR0aD0iNjAiIGhlaWdodD0iMTAiIHg9IjE3MCIgeT0iMTYwIiBmaWxsPSIjMDAwMDAwIiAvPjwvc3ZnPg==

Decode the base64, and voila:

You can also call the NounsDescriptor contract directly. Head over to the verified NounsDescriptor contract on Etherscan and click on #10 (the generateSVGImage function). Input any Noun seed (e.g. ([0,2,4,123,2])) to get your encoded SVG image!

Governance

Coming soon.